Cooked.

AI is here now, and like every tool that expands access, it has triggered a familiar kind of panic.

Designers are asking whether their jobs are at risk. Whether the work will disappear. Whether something essential is being lost. These questions feel new, but the structure of them isn’t. We’ve seen this before with the Macintosh, with Adobe InDesign, with Canva. We ask whether brand designers should draw type. Each time, the boundary between professional and amateur shifts, and each time, the response is the same.

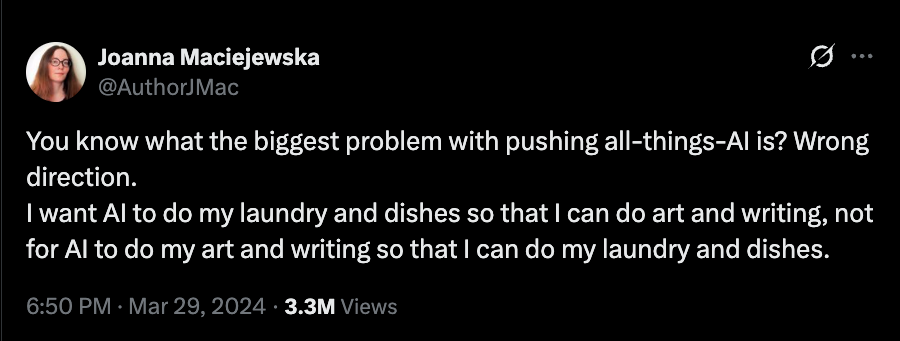

The current conversation around AI follows that pattern almost exactly. There’s talk of “real” creativity; underneath it lies a shared assumption that what is being threatened is something uniquely human, something that cannot and should not be replicated. And there is something to that.

But if the defence of design rests on the idea that our work is fundamentally different from the systems now producing it, then we have to be honest about how that work has always been made. Designers reference, borrow, reinterpret, and remix constantly. The difference has never been purity. It has been taste, context, and control.

So when AI produces something that looks familiar, the discomfort isn’t just about the output. It’s about proximity. The distance between professional practice and general access is collapsing again, and with it, the idea that the ability to produce visual form is what defines the designer.

That is why the current backlash feels so intense. Not because the technology is unprecedented, but because it forces a more uncomfortable question: if design is not defined by the act of making in and of itself, then what exactly are we defending?

If the first instinct is to defend design on the grounds of creativity, then it’s worth examining what that actually means in practice.

Most arguments against AI settle here. That what designers do is inherently creative, and that this creativity is what separates professional work from automated output. The implication is that there is something essential in the act of making, something intuitive, expressive, and distinctly human, that cannot be reproduced through pattern recognition.

But design has never been as self-contained as that argument suggests.

The discipline is built on reference. On the ability to recognise what has come before and reframe it for a new context. Designers are trained to look outward, to archives, movements, other designers (we especially love the dead ones), and to build from there. It is not a flaw in the system. It is the system. The difference has never been originality in a pure sense.

This is what makes the current line of criticism difficult to honour in good faith. The use of existing work, the recombination of known forms, and the reliance on large bodies of visual data have always been fundamental to how designers operate. Large language models are trained on vast amounts of existing work, not so unlike your Flickr collection of Swiss design and Letraset scans.

That does not mean the comparison is clean, or that the ethical questions disappear. It means that “creativity” on its own is not a stable boundary. If that boundary doesn’t hold, then the argument has to move elsewhere. The ethical position is where the critique of AI feels strongest.

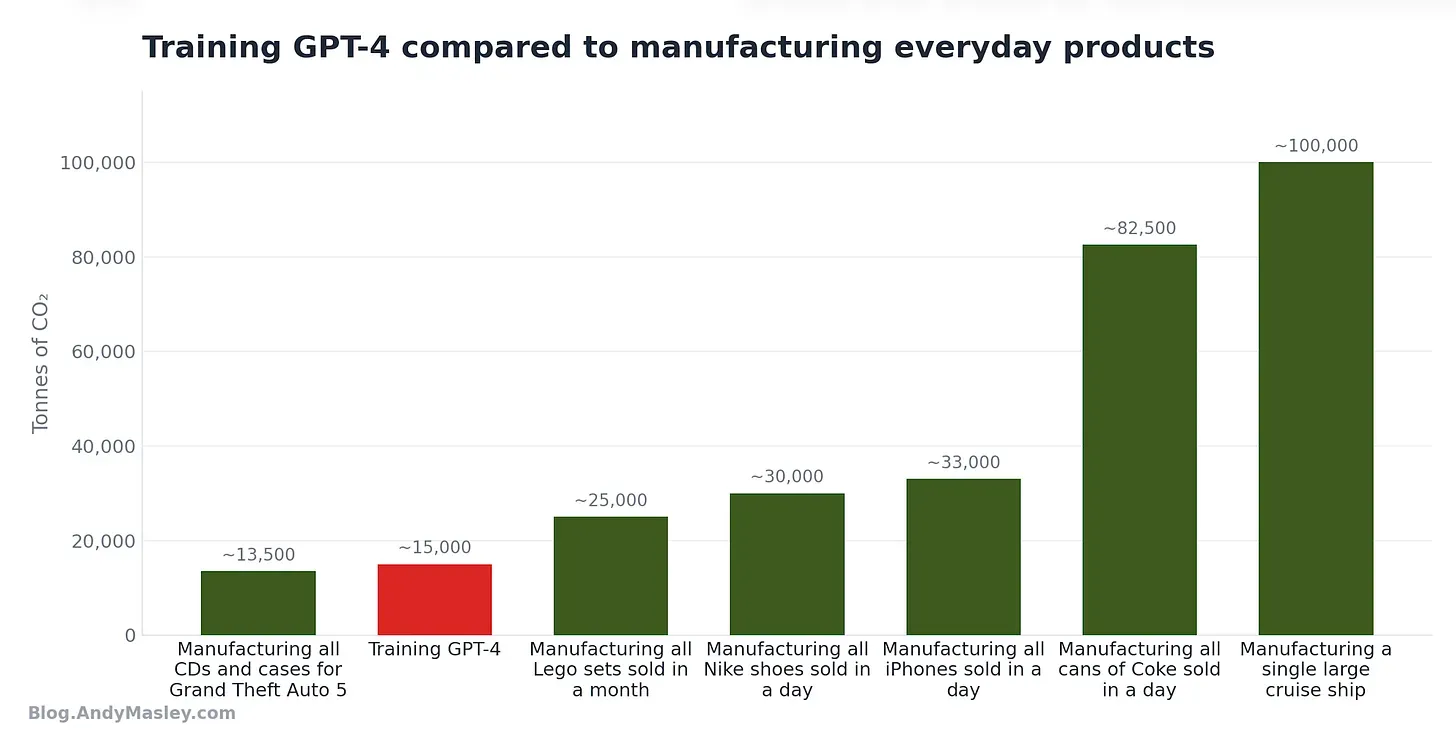

So we know that LLMs are trained on existing work done by humans, much of it used without consent or fair compensation, and that they consume tremendous amounts of natural resources at a high environmental cost. That raises real questions about ownership, attribution, and the conditions under which these systems are built.

But none of this is unique to AI. Design, as an industry, has never operated outside of compromised systems. The tools we use, the clients we work with, and the platforms we publish on are all embedded in structures that extract, exploit, or externalise harm in one way or another.

What is new is the clarity: AI makes the mechanisms more visible, and therefore easier to point to and reject. But rejecting one tool does not resolve the structure it operates within.

If the ethical stance is that designers should not engage with systems built on extraction, then that position would have to extend much further than AI. It would have to account for the broader ecosystem of technology, media, and commerce that design already depends on. In practice, that consistency is difficult to maintain, and most people don’t attempt to. We can reject AI for its involvement in extractive systems, and still work for institutions shaped by those same systems, as long as they remain culturally legitimate and cool enough for the portfolio.

So the moral argument ends up being selective and uneven in application. What matters is less whether AI is ethical in isolation than how designers relate to the systems that make its use possible.

At this point, it’s worth being precise about what we’re talking about. What is currently described as “AI” in practice is not intelligence in any meaningful sense. These are systems trained on large datasets to recognise patterns and generate outputs that resemble what they have seen. They do not understand context, intention, or consequence. They predict and assemble. This distinction matters because it shifts the conversation away from replacement and toward function. AI does not decide to act. It is deployed.

Which means the question is not what AI is capable of in isolation, but what it is being used to do, and by whom. Framed this way, the conversation becomes less speculative and more familiar. These are tools designed to increase efficiency, reduce cost, and scale production. That is not a radical departure from previous technologies. It is a continuation of the same logic that has shaped design’s relationship to industry for as long as it has existed. To some extent, it is the visual systems we’ve been building (custom geometric sans, ambitious branding, and killer copy) that made this transition possible.

If the logic holds, then the trajectory is not difficult to predict. AI will be adopted wherever it makes processes faster, cheaper, or more scalable. Not because it produces better work, but because it produces acceptable work at a lower cost. That has always been enough. The question of whether designers approve of AI is largely irrelevant to whether it will be used. The decision will be made at the level of institutions, where efficiency outweighs sentiment, and where design is treated as a function rather than a discipline.

We have seen this before. Some roles disappear. Photo retouchers are fewer in number now than they were before the latest edition of Photoshop. Some jobs change. New ones emerge. The centre of gravity moves.

What is different now is not the existence of the pattern, but the speed at which it is unfolding, and the scale at which it can be applied. This is where the conversation tends to misidentify the problem. The primary risk is not that AI will eliminate designers altogether. It is that it will redefine the terms under which design labour is valued.

When production becomes easier to automate, the perceived value of execution decreases. When outputs can be generated quickly, the expectation of speed increases. The designer’s role is pushed further away from authorship and closer to operation.

In that shift, something more subtle is at stake: agency. Who decides what gets made? Who controls how it is used? Who owns the output, and who benefits from it? These questions have always existed, but AI intensifies them by concentrating capability within systems that designers do not control. If the response to AI is limited to rejecting the tool, then those questions remain unaddressed. The work continues to flow through the same channels, under the same conditions, with or without us. What we’re scared of isn’t AI taking our jobs; what we’re scared of is AI making our work less special.

Which brings the conversation back to where it should have started.

The issue is not whether AI is good or bad, or whether designers choose to use it. The issue is how design, as a practice, relates to the systems that deploy it. Tools change. They always have. What persists is the structure in which those tools are embedded: the movement of capital, the consolidation of platforms, the distribution of control.

There are ways to engage with these systems that preserve a degree of agency. They are not always obvious, and they are not always easy. They require a different relationship to tools, to distribution, and to ownership. Most of the current conversation does not reach that point.

If there is a way forward, it will not come from deciding whether to use AI or not. We can’t reduce a structural shift to personal choice. What matters is understanding the system that “AI” operates within rather than blindly avoiding it, and deciding where, within that system, you are willing to stand.